Here’s my thread explaining why I left, and why I just might not bother ever going back:

The Smile Position Paper

A Thought from a Tweet

This morning, I bumped into Paul Ford’s quote, and it was so brilliant that I just had to expand on it. In the design of Smile I’ve thought about this for years — what matters in computation, and have I made the language focus on that? — but I think it’s worth talking about it as a position paper. Ford hits the nail on the head: Smile has an opinion, and it’s a strong opinion, just one that’s very different from most languages in use today.

Filed under Uncategorized

Syntax matters

Something that I haven’t really explained well is why I believe Smile code is shorter, better, and simpler than nearly everything else out there. I’ve talked about it at a high level, and I’ve shown “Hello, World” and simple test programs, which are nice, but none of that really gives you the feel of coding in Smile.

So here’s an example of some unit tests written using a simple unit-test suite that I’ll be including out-of-the-box with the Smile distro. Writing unit tests is a little closer to the day-to-day activities of Real Programmers, and it helps you to see a little better what code in a language feels like:

```
#include "testing"

tests for the arithmetic unit {

    it should add small numbers {
        x = 5 + 7
        assert x == 12
    }

    check the boundary conditions {
        x = 5 + 7
        assert x != 0 (adding positive numbers should never produce zero)
    }
}
```

Filed under Uncategorized

I’m still doing things

It’s been a while.

The credibility of my tagline (that I’m still writing but you’re not reading) seems a bit stretched lately, as it’s been months since I last posted anything about anything. That’s not for a lack of interesting things to write about, but mostly for a lack of time in which to do it: I most often think of things to say while driving to or from work, or while lying in bed at night after spending my evening feeding and cleaning up after small children, and neither scenario is especially conducive to posting on one’s blog. Even when I do get a little private time, I tend to spend it working on Smile rather than posting here about Smile, which doesn’t especially help anybody else know what’s going on with it.

So let’s talk about Smile.

Filed under Smile, Technology

Downfall

Downfall. 2236, Pogo Publishing, Lagos, Nigeria. 577 pages.

It goes without saying that the collapse of America was the defining event of the 21st century.

Historian Warren Keeler’s new book, Downfall, explores the collapse of the world’s most technologically-advanced empire in breathtaking scope, and while it leaves some to be desired — I found the scant mention of the Harriman Riots disappointing — it is still among the most comprehensive analyses of the collapse to date.

The core outline — that poorly-educated, rural Americans were taken in by a fast-talking real-estate mogul who became not only America’s last President but its first Dictator, ultimately driving the nation to despotism and ruin — this story is well-known to any schoolchild today. Still, despite all of the analyses of the rise and eventual fall of the Trump Dynasty, few attempt to trace its origins farther back than Donald Trump’s rise to power in 2016. Keeler’s book fares excellently in this time period, chronicling not only the rot in the Democratic Comradeship that ultimately led to the failed candidacy of Hillary Crookton, but also the weaknesses in the Strong Republicans For Strength Party that allowed Trump to rise in the first place.

Keeler also excellently covers the pivotal first three years of Trump, long before he became Grand Commander. I had not realized, for example, that the retirement of a single Justice from the American Ultimate Court — then called the Supreme Court, apparently — was what ultimately led that Court to rule in favor of abolishing term limits on the American President. It is also interesting to wonder what might have happened had the election of 2018 swung the other way: Perhaps the American Legislature might have been a better check on Trump’s ambitions; with just one more Democratic vote to tip the balance toward his opposition, he might not have been able to run roughshod over the other two branches of government.

But, of course, history did not play out that way. The Legislature quickly became Trump’s rubber stamp; laws were passed to silence all information except Foxnews and Trumpitter; and by the time Democratic leaders began to disappear at the hands of ICE, his private army, even his original supporters were too terrified to stop him. The Battle of New York City (then simply called “quieting the insurgent rats”) was the final fight between Trump’s Blackshirts and the Hashtag Resistance, and it put an end to any attempt to stop his seizure of power. The collapse of the American economy during the subsequent Deportations (his euphemism for the murder of his opposition and critics) was almost inevitable.

In the wake of Donald Trump’s reign, and the failed dictatorship of his son, nearly a hundred million people died. And, of course, America is ruined, its landscape now badly irradiated: Trump’s decision to use his nuclear weapons on California is rightly denounced today, and was denounced even by some of his supporters at the time. Yet Keeler does not attempt to pass judgment on either Trump or his family: True to his trade, he is a dispassionate historian, chronicling the facts as accurately as he can from the remaining records, many of which were lost in the collapse of the internet. I would have liked stronger opinions from Keeler on the worst of Trump’s atrocities, but I understand his desire to remain objective.

Ultimately, of course, without the collapse of America, the rise of the African Empire would have been unlikely. And while our continent is strong and wise and capable, there are still technological and artistic wonders known to America’s world that we are only now rediscovering. One cannot help but wonder what the alternative history of Earth might have been, had the American people put up a little more of a fight against the greatest despot and mass-murderer mankind has ever known.

— Jax M’nungo

Filed under Uncategorized

Wow, where have I been?

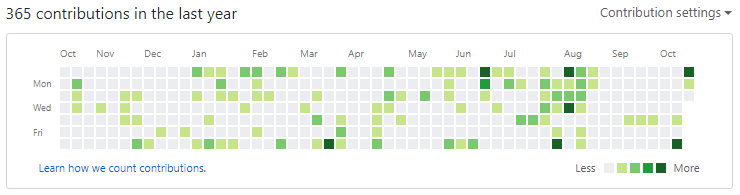

I just realized I haven’t posted anything really serious about Smile in all of 2017. I’m definitely overdue for a status update! There have been over 360 commits in it this year, more than one commit per day (on average).

Filed under Uncategorized

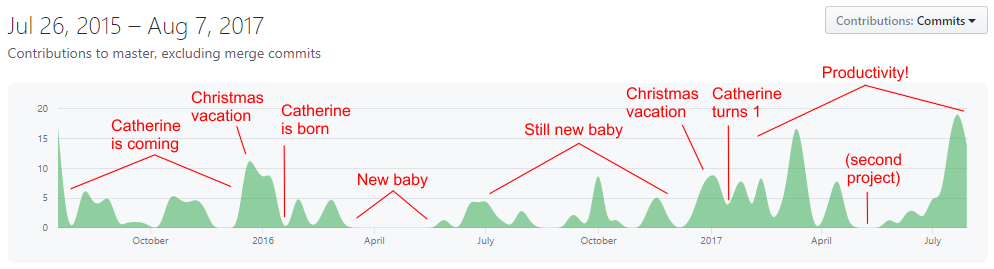

Life, as a story told in Git commits

Coders who are parents will know.

Filed under Uncategorized

After Further Review…

I’ve spent a little time in the Stack Overflow review queues lately, trying to do my civic duty by helping the programming community, and I’ve learned a few things.

Filed under Uncategorized

Some thoughts on Islam

There was a time that I was mostly innocent of knowing anything about Islam.

I remember learning about Islam in school in the ’80s; it was a religion practiced by a bunch of people in parts of the world I’d never been to, lumped in my mind with Hinduism and Buddhism and Shintoism into the category of “other weird religions.” My, how times change.

The only real interaction I had with Islam was over the Israel/Palestinian crisis. I am unabashedly a supporter of Israel. That’s what being half-Jewish gets you, really. I’m not a hard-liner about it, but Israel has a right to exist, and I viewed the Islamic world’s hard position on Israel and a lot of the PLO’s actions in the ’90s with extreme skepticism. The Israelis needed their home, and the Palestinians, well, they were Arabs and had an entire Middle East to live in: They didn’t need that tiny, tiny strip of land.

Then 9/11 happened.

I remember watching the towers fall. I was an adult, twenty-five years old, standing in a bathrobe in front of a TV trying to make sense of the impossible pictures and death. It was like a bad action movie, only it was real, and those dots falling out of the collapsing towers were real, live human beings, plummeting to their deaths. My father was in Washington, DC during the attacks, and watched the third plane strike the Pentagon out the State Department window. And then the last plane crashed near Shanksville, its valiant passengers daring to try to do what was right, and etching their names in the pantheon of American heroes.

And I remember very distinctly watching, on that very same day, video of Palestinians dancing, dancing, dancing, and cheering in the streets. Celebrating the deaths of other men. And I thought to myself, regardless of religion, who does that? Who dances when another man dies?

In that moment, Islam became a dirty word.

Good men don’t dance in the streets when their fellow men are murdered. Therefore, these men I see cannot be good.

In five seconds of video, Islam went from a “religion of peace” to an absolute evil. The Palestinian claim to Israeli land? Forfeit, null, and void. The Arab claims of mistreatment at the hands of Americans? Voided. The claim that Islam was on equal footing with all other religions? An absolute falsehood.

I was then supportive of efforts to seek out Bin Laden. The perpetrators of that evil had to be found, and stopped. And while I tried hard to be reasonably fair, it was impossible for me to give Islam a fair shake alongside every other religion. It had perpetrated an unfathomable evil, and though there were those who claimed it was still a religion of peace, that the Wahhabists who wrought that terrible crime were unsupported by the majority, the video of the Palestinians dancing and celebrating spun on an endless loop in my head and contradicted any claims to the contrary.

But I am a researcher by nature, and I read. To better understand our common foe, I read about them: About the origins of Islam and Mohammad, about the Sunni/Shia split, about its early spread, about the European and American mistakes there in the 19th and 20th centuries, about the birth of Wahhabism, about the origins of Israel and the Palestinian crisis, about Bin Laden and the growth of Al Qaida, and about Islam in its present day.

To my chagrin, many of the premises on which America based its attacks in the Middle East in the early 2000s were false. There were no weapons of mass destruction in Iraq. Afghanistan was a failed state of guerrilla thugs, not a violent Islamic power bent on world domination. The Iranian revolution was, frankly, more directly America’s fault than it was anything else, and they had every right to be angry over us propping up the Shah.

But still, I viewed Islam with considerable skepticism. Knowing it better raised a lot of questions about it, but it was still an enemy at the gates, and it still had to be stopped. Those 1.6 billion people were all either potential violent attackers or brainwashed accomplices or simply dupes.

I equally distinctly recall the moment my position on Islam changed. Thank you, Mr. Khizr Khan.

I suppose I knew that there were Muslims in the military before Mr. Khan spoke at the Democratic National Convention. I saw the crescents at Arlington National Cemetery. But it didn’t come home quite the same way as it did when he spoke. Here was a husband and wife, devoted parents who had lost a son. That son had made the ultimate sacrifice: He had joined the military, gone overseas at his country’s asking, and come back in a box draped with an American flag. And far from being bitter, Mr. Khan was defiant. Their family had offered the ultimate sacrifice for America, and the only thing that Mr. Khan asked, the only thing he insisted on, was respect for the Constitution and what it stood for. Respect for America, for the rights and freedoms and truths it was founded on. Respect for his home, and nothing about his religion.

He was an American first, and a Muslim second.

That moment struck me. Somewhere in the back of my mind, I had always seen Islam as an overriding force: Its adherents were Muslim before all else, and therefore couldn’t really be human first. They couldn’t be humane first. And they certainly couldn’t be American first. But here was Khizr Khan, and he and his wife had sacrificed more for the belief that all men are created equal than anyone I knew had. Here was a man holding up a copy of the Constitution on live TV, and insisting that it was more important than who belonged to which religion, that its insistence on freedoms and rights mattered more than our petty squabbles, that its assurances and guarantees were worth any price. Here was a good man holding up that document I hold so dear and insisting that it was worth the ultimate sacrifice, a price he had paid and I had not.

Over the course of the next month, my animosities crumbled. I read and I read and I read and I read, and a decade of hateful walls began to fall down.

Today, I still have some skepticism of Islam. But it’s mostly academic: I have some real, hard questions about its founding, and equally hard questions about its early spread. I have no good answers about the Israel/Palestine mess. And many of the bad things I had formerly attributed to Islam I now rightly attribute to problems in traditional Arabic culture, such as the region’s abysmally poor record on women’s rights.

But all of those are isolated components. 1.6 billion people mostly view Islam as a peaceful, stabilizing force, and I now respect that as it is. There’s a tiny fraction of a percentage of those people that are troublemakers, and while a tiny fraction of a percentage is large in absolute numbers when multiplied by 1.6 billion, it’s still a tiny fraction of a percentage. Christianity is neither better nor worse on its percentages. Most Muslims want the same things I want: To have a home, to have a job, to have a daily meal, to have a spouse, to raise their families, to watch their children learn and grow, to live in a peaceful place, to have meaning in their lives.

Which is a very long and roundabout way of saying that I finally see Muslims for simply what they are: People.

Not evil monsters, not villains, not caricatures, not a violent force to be opposed at all costs, and certainly not the standing army in an impending clash of civilizations: The followers of Islam are people, other human beings, mostly good most of the time just like anyone else, and neither more nor less than that.

It’s a shame it took me so long to get there.

Filed under Religion

Live from a future BBC One

June 30, 2017

[begin transcript]

We come to you live from Balmedie, Scotland, where BBC One producers are about to make an announcement.

Trumball: Hello, hello, thank you all for coming. I’m Harold Trumball, the new director of BBC One under Theresa May, and we have a fabulous announcement for you today, just fabulous. With me is Andrew Scotterpyne, the lead producer on the upcoming Doctor Who series. Andy, it’s all yours, take it away.

Scotterpyne: Thank you, Harry. Good afternoon, all. I’m pleased to announce that after an exhaustive search, we’ve found a wonderful new Doctor for 2018. An actor who needs truly no introduction, but we’re so proud we got him that we’re giving him one anyway. You know him from a wide variety of American cinemas, and although it’s the first time we’ve cast an American as the Doctor, he’s a genuinely tremendous pick, and we couldn’t be happier.

Trumball: Absolutely!

Scotterpyne: It’s hard to fill the shoes of such a great actor as Peter Capaldi, but we think we’ve managed to find someone even better, truly even better. Ms. May herself personally wanted to interview him, he’s that good.

Trumball: I jumped when I heard the name!

Scotterpyne: Now I know that the Thirteenth Doctor has a bit of superstition around the number, but we think we’ve found someone far, far bigger than any of that nonsense you’ve seen debated in the press. So without further ado, I present to you your Thirteenth Doctor, Donald J. Trump!

Audience murmurs and a few people are heard shouting

Trumball: I say, I say, you couldn’t have found a better man for the job. We didn’t know what to do — really, how could you top Capaldi? — but then the Americans went and impeached Mr. Trump. Ms. May called me the very same day and demanded we jump at the chance to hire such a big celebrity. Money was no object!

Scotterpyne: Let me say that writing scripts for Mr. Trump will be a highlight of my career. He’s so good that you can give him a blank page and he’ll manage to make an interesting show for three or four hours! But I shouldn’t put words in his mouth; everyone, if you could please put your hands together, please offer a hearty round of applause for Donald J. Trump, your new Thirteenth Doctor!

Trump comes out from behind a curtain

Trump: Thank you, thank you, thank you all, I’m honored. I have to say that when these guys came to me and asked me to be the Doctor, I was like, eh, what’s a Doctor? But then they explained it, and it’s a tremendous, tremendous role, and I’m honored to be chosen for it. We’re gonna have a great series, a great series, it’s gonna be the best series ever.

Someone comes out, whispers something to Trump, and the Secret Service man standing beside him nods

Trump: Anyway, I have to say, this is gonna be great. Let me tell you about this series. We got an all new Tradis —

Scotterpyne: Tardis.

Trump: — Tardis, and it’s fabulous, tremendous. You should see it. It’s the biggest, best, Tardis ever. Yuger on the inside than any Tardis before, just yuge. And classy. None of that tacky plastic crap, or those white frickin’ round things. We got all gold, mahogany, marble floors, completely classy from start to finish. Jacuzzi room off the one side, shrimp lounge, a fully-stocked wine bar, we got Tony Danza to bartend, and he serves up a way, way better Screwdriver than that sonic piece of junk. And dancing girls, none of those dumb levers and dials, just hot dancing chicks on gold poles. You should see it. It’s amazing. We spared no expense.

Scotterpyne: Tell them about the Daleks.

Trump: Oh, yeah, we totally classed up those trash cans. What, they got plungers and eggbeaters for hands? Whose idea was that? We got way better Daleks this time, way better. Solid gold, and scary, like with chainsaws and hand grenades. That’ll put your kids where you want them. Cybermen too, those tin can robots are gone, we got Michael Bay to loan us some of those Transformers of his, and it’s gonna be awesome, just awesome.

Scotterpyne: And don’t forget the companions!

Trump: I was just gettin’ to that. We got the best companions this time. None of those actors you never heard of, no, bein’ all whiny and crap. I got Lou Ferrigno for the one, and he’s just tremendous, just tremendous, a great guy, all around the best you could want, he’s gonna be just clobberin’ bad guys left and right. And we got Sarah Palin playing the rootinest, tootinest sheriff in the old West, along for the ride to meet some aliens and kick some butt. You haven’t ever seen a Doctor What like this.

Trumball: Doctor Who.

Trump: What?

Trumball: Doesn’t matter, carry on.

Scotterpyne: That’s about all we have time for. Mr. Trump has to be off for a round of golf, but before he goes, does anyone have a question for him? We have just time for one question. Mr. Neely, is it? From the Times?

Neely: Yes. Are — you people insane?

Scotterpyne: Why, of course not. Mr. Putin would have us committed if —

Trumball: — uh, that’s all the time we have. I’d like to thank you all for coming, have a delightful Brexit, and I’d like to remind you that whatever you may think of our decisions, however you may consider all this, it’s still far better than letting him start World War III. Thank you, and good day.

[end transcript]

Filed under Humor